A couple of years ago I was tasked with writing a MAC decoder for HSDPA packets. Writing the decoder wasn’t that difficult, but one of the requirements was to make it robust. What does it mean to make a decoder robust? In the simplest sense:

- It should not crash even when given an input of rubbish.

- It should identify errors and inform the user.

- It should do a best-effort job of decoding as much of the input as makes sense.

- It should exit gracefully even when the configuration of HS MAC or PHY is null or invalid.

In the adopted implementation, the decoder parses the input bit stream and decodes it on the fly. It flags errors as it encounters them and keeps decoding as much of the input stream as possible, so a single input can produce multiple errors. Naturally, decoding HSDPA packets requires the HSDPA configuration at MAC as a control input to the decoder. In addition, we wanted the output to be user-friendly, so we also wanted to map the data stream to the HS-SCCH, HS-DPCCH and HS-PDSCH configuration.
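To make the decode-and-keep-going idea concrete, here is a minimal sketch. It is not the original decoder: `BitReader`, `MacConfig`, `DecodedHeader` and `decodeHeader` are hypothetical names, and the field widths (1-bit version flag, 3-bit queue ID, 6-bit TSN) are an assumption about the MAC-hs header that should be checked against 3GPP TS 25.321. Only the error names are taken from this post.

```cpp
#include <cstddef>
#include <cstdint>
#include <optional>
#include <set>
#include <utility>
#include <vector>

// Error names come from the post; all other names here are hypothetical.
enum class DecodeError { macConfigurationNull, queueIdentifierInvalid, unexpectedEndOfStream };

// Hypothetical stand-in for the MAC configuration.
struct MacConfig { std::set<std::uint32_t> configuredQueueIds; };

// Reads bits MSB first and refuses to read past the end of the buffer.
class BitReader {
public:
    explicit BitReader(std::vector<std::uint8_t> bytes) : bytes_(std::move(bytes)) {}

    std::size_t remaining() const { return bytes_.size() * 8 - pos_; }

    // Returns std::nullopt (and consumes nothing) if the request cannot be met.
    std::optional<std::uint32_t> read(std::size_t count) {
        if (count == 0 || count > 32 || count > remaining()) return std::nullopt;
        std::uint32_t value = 0;
        for (std::size_t i = 0; i < count; ++i, ++pos_) {
            const std::uint32_t bit = (bytes_[pos_ / 8] >> (7 - pos_ % 8)) & 1u;
            value = (value << 1) | bit;
        }
        return value;
    }

private:
    std::vector<std::uint8_t> bytes_;
    std::size_t pos_ = 0;
};

struct DecodedHeader {
    std::optional<std::uint32_t> versionFlag, queueId, tsn;
    std::vector<DecodeError> errors;  // every problem found, not just the first
};

// Best-effort decode: record each error and keep whatever fields were readable.
DecodedHeader decodeHeader(const std::vector<std::uint8_t>& bytes, const MacConfig* config) {
    DecodedHeader out;
    if (config == nullptr) out.errors.push_back(DecodeError::macConfigurationNull);

    BitReader reader(bytes);
    out.versionFlag = reader.read(1);  // assumed width
    out.queueId = reader.read(3);      // assumed width
    out.tsn = reader.read(6);          // assumed width
    if (!out.versionFlag || !out.queueId || !out.tsn)
        out.errors.push_back(DecodeError::unexpectedEndOfStream);

    // The queue ID can only be checked when a configuration is available.
    if (config != nullptr && out.queueId &&
        config->configuredQueueIds.count(*out.queueId) == 0)
        out.errors.push_back(DecodeError::queueIdentifierInvalid);
    return out;
}
```

Calling `decodeHeader` with a one-byte input and a null configuration reports both `macConfigurationNull` and `unexpectedEndOfStream`, while still returning the version flag and queue ID that could be read.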

Once the decoder was coded, the next important task was to test it. Robustness of design is only as good as the tests on the design. It is customary to perform smoke tests for typical scenarios. Then there are boundary value tests. Then there are stress tests, which are applicable more at the system level than at the module level. There are also performance tests, which were not a major concern for our platform.

Because the decoder parses the configuration as well as the data, it was important that the decoder handle the entire space of possible configurations, not just the input stream.

The following possible decoding errors were identified:

- genericError

- hsDpcchConfigurationNull

- hsPdschConfigurationNull

- hsScchChannelInvalid

- hsScchConfigurationNull

- macConfigurationNull

- numberOfMacDPdusOutofRange

- queueIdentifierInvalid

- selectedTfriIndexInvalid

- sidInvalid

- subFrameNumberOutofRange

- tooManySidFields

- transportBlockSizeIndexInvalid

- transportBlockSizeIndexOutofRange

- unexpectedEndOfStream

- zeroMacDPdus

- zeroSizedMacDPdus

Arriving at these possibilities requires a little bit of analysis of the MAC-hs PDU structure. One must look at all the fields, the range of valid values and the dependencies from one field to another. One must also look at all these in relation to the constraints imposed by the configuration.
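As an illustration of that analysis, the sketch below checks already-extracted SID and PDU-count fields against a configuration. Everything except the error names is hypothetical: `SidBlock`, `QueueConfig` and `validateSidBlocks` are invented for this example, and the limits are plain configuration values rather than figures quoted from the specification.

```cpp
#include <cstddef>
#include <cstdint>
#include <map>
#include <vector>

// Error names come from the list above; the rest is illustrative.
enum class DecodeError {
    macConfigurationNull,
    numberOfMacDPdusOutofRange,
    sidInvalid,
    tooManySidFields,
    zeroMacDPdus
};

// One (SID, number of MAC-d PDUs) pair as read from the header.
struct SidBlock {
    std::uint32_t sid;
    std::uint32_t pduCount;
};

// Hypothetical per-queue configuration: known SIDs plus two limits.
struct QueueConfig {
    std::map<std::uint32_t, std::uint32_t> pduSizeBitsBySid;
    std::size_t maxSidBlocks = 0;
    std::uint32_t maxPdusPerBlock = 0;
};

// Checks every block and returns all errors found, in the order encountered.
std::vector<DecodeError> validateSidBlocks(const std::vector<SidBlock>& blocks,
                                           const QueueConfig* config) {
    std::vector<DecodeError> errors;
    if (config == nullptr) {
        // Without a configuration none of the field checks are meaningful.
        errors.push_back(DecodeError::macConfigurationNull);
        return errors;
    }
    if (blocks.size() > config->maxSidBlocks)
        errors.push_back(DecodeError::tooManySidFields);

    for (const SidBlock& block : blocks) {
        // Dependency on configuration: the SID must map to a configured PDU size.
        if (config->pduSizeBitsBySid.count(block.sid) == 0)
            errors.push_back(DecodeError::sidInvalid);
        // Range check on the field itself.
        if (block.pduCount == 0)
            errors.push_back(DecodeError::zeroMacDPdus);
        else if (block.pduCount > config->maxPdusPerBlock)
            errors.push_back(DecodeError::numberOfMacDPdusOutofRange);
    }
    return errors;
}
```

Each check corresponds to one question from the analysis: is the value in range, does it depend on another field, and is it allowed by the configuration.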

Unit tests were created using Boost. In particular, BOOST_AUTO_UNIT_TEST was used, as was already the case with most of the modules in the system. It’s so easy to use (much like JUnit in Java) that it encourages developers to test their code as much as possible before releasing it for integration. If bugs still remain undiscovered, or creep in due to undocumented changes to interfaces, the unit tests can easily be expanded to cover the bug fix. For some critical modules, we also had the practice of running these unit tests as part of the build process. This works well as an automated means of module verification, even when the system is built on a different platform.

Below is a short list of tests created for the decoder:

- allZeroData

- hsPdschBasic

- macDMultiplexing

- multipleSID

- multiplexingWithCtField

- nonZeroDeltaPowerRcvNackReTx

- nonZeroQidScch

- nonZeroSubFrameCqiTsnHarqNewData

- nonZeroTfri16Qam

- nullConfiguration

- randomData

It will be apparent that tests are not named as “test1”, “test2” and so forth. Just as function names and variable names ought to be descriptive, test names should indicate the purpose they serve. Note that each of the above tests can have many variations both in the encoded data input stream and the configuration of MAC and PHY. A test matrix is called for in these situations to figure out exactly what needs to be tested. However, when testing for robustness it makes sense to test each point of the matrix. Where the inputs are valid, decoding should be correct. Where they are invalid, expected errors should be captured.
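One slice of such a test matrix can be generated rather than written by hand. The sketch below enumerates every present/null combination of the four configurations and lists the null-configuration errors a test would expect at each point. `ConfigPresence`, `allPresenceCombinations` and `expectedNullErrors` are hypothetical names, and the rule that each missing configuration yields its own error independently is an assumption made for illustration.

```cpp
#include <string>
#include <vector>

// Which of the four configurations are supplied (true) or null (false).
struct ConfigPresence {
    bool mac;
    bool hsScch;
    bool hsPdsch;
    bool hsDpcch;
};

// All 2^4 = 16 points of the present/null matrix.
std::vector<ConfigPresence> allPresenceCombinations() {
    std::vector<ConfigPresence> points;
    for (unsigned mask = 0; mask < 16; ++mask) {
        points.push_back(ConfigPresence{(mask & 1u) != 0, (mask & 2u) != 0,
                                        (mask & 4u) != 0, (mask & 8u) != 0});
    }
    return points;
}

// Errors a test would expect for one point (assumed: one error per null config).
std::vector<std::string> expectedNullErrors(const ConfigPresence& p) {
    std::vector<std::string> errors;
    if (!p.mac) errors.push_back("macConfigurationNull");
    if (!p.hsScch) errors.push_back("hsScchConfigurationNull");
    if (!p.hsPdsch) errors.push_back("hsPdschConfigurationNull");
    if (!p.hsDpcch) errors.push_back("hsDpcchConfigurationNull");
    return errors;
}
```

A test can then loop over `allPresenceCombinations()`, run the decoder at each point, and compare the reported errors with `expectedNullErrors`.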

In particular, let us consider the test named “randomData”. This runs an input stream of randomly generated bits (the stream itself is of random length) through the decoder. It does this for each possible configuration of MAC and PHY. The test is to see that the decoder does not crash. Randomness does not guarantee that there will be an error, but it does make a valid test to ensure the decoder does not crash.
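A random-data test of this kind can be sketched as follows. This is not the original test: `Decoder`, `randomStream` and `runRandomDataTest` are hypothetical names, the decoder is passed in as a callable so the sketch stays self-contained, and streams are byte-aligned for brevity where the real test used a random number of bits.

```cpp
#include <cstddef>
#include <cstdint>
#include <functional>
#include <random>
#include <vector>

// Hypothetical decoder entry point: takes raw bytes, must never crash.
using Decoder = std::function<void(const std::vector<std::uint8_t>&)>;

// Builds a stream of random length (0..maxBytes) filled with random bytes.
std::vector<std::uint8_t> randomStream(std::mt19937& rng, std::size_t maxBytes) {
    std::uniform_int_distribution<std::size_t> lengthDist(0, maxBytes);
    std::uniform_int_distribution<int> byteDist(0, 255);
    std::vector<std::uint8_t> bytes(lengthDist(rng));
    for (std::uint8_t& b : bytes) b = static_cast<std::uint8_t>(byteDist(rng));
    return bytes;
}

// Feeds `iterations` random streams to the decoder and returns how many
// calls completed. A crash or uncaught exception fails the test by itself.
std::size_t runRandomDataTest(const Decoder& decode, std::size_t iterations,
                              std::uint32_t seed, std::size_t maxBytes = 64) {
    std::mt19937 rng(seed);  // fixed seed makes a failing run reproducible
    std::size_t completed = 0;
    for (std::size_t i = 0; i < iterations; ++i) {
        decode(randomStream(rng, maxBytes));
        ++completed;
    }
    return completed;
}
```

Seeding the generator explicitly is a deliberate choice: when a random input does expose a bug, the same seed replays the same stream, so the failure can be reproduced and kept as a regression test. In a full test, the callable would wrap the decoder and be run once for each MAC and PHY configuration.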

While the specific tests gave me a great deal of confidence that the decoder worked correctly, they did not give me the same confidence about its robustness. It was only after the random data test that I discovered a few more bugs, fixed them and went a long way in making the decoder robust.

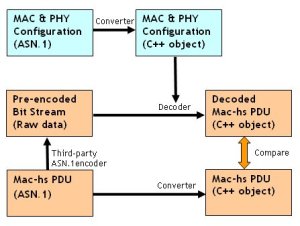

I will conclude with a brief insight into the data flow during testing. This is illustrated in Figure 1. Let us note that,

- ASN.1 is used as the input for all unit tests. ASN.1 is widely used in protocol engineering. It is the standard in 3GPP. It makes sense to use an input format that is easy to read, reuse and maintain. Available tools (such as the already tested Converter) can be reused with confidence.

- Converters are used to represent ASN.1 content as C++ objects.

- Comparison between decoded PDU and expected PDU is done using C++ objects. A comparison operator can do this elegantly.

- A third-party ASN.1 encoder is used to generate the encoded PDUs. This gives independence from the unit under test. An in-house encoder would not do: a bug in it could also be present in the decoder, invalidating the test procedure.

- It is important that every aspect of this test framework has already been tested in its own unit test framework. In this example, we should have prior confidence about the Converter and the Compare operator.
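The comparison step mentioned in the list can be sketched with a plain equality operator. The `MacHsPdu` and `SidBlock` types below are hypothetical stand-ins for the real C++ objects produced by the Converter and the decoder, and they carry only a few representative fields.

```cpp
#include <cstdint>
#include <tuple>
#include <vector>

// Hypothetical stand-ins for the decoded and expected PDU objects.
struct SidBlock {
    std::uint32_t sid;
    std::uint32_t pduCount;
};

inline bool operator==(const SidBlock& a, const SidBlock& b) {
    return std::tie(a.sid, a.pduCount) == std::tie(b.sid, b.pduCount);
}

struct MacHsPdu {
    std::uint32_t queueId;
    std::uint32_t tsn;
    std::vector<SidBlock> blocks;
};

// Field-by-field comparison; std::vector compares element-wise using
// the SidBlock operator== defined above.
inline bool operator==(const MacHsPdu& a, const MacHsPdu& b) {
    return std::tie(a.queueId, a.tsn, a.blocks) == std::tie(b.queueId, b.tsn, b.blocks);
}

inline bool operator!=(const MacHsPdu& a, const MacHsPdu& b) { return !(a == b); }
```

With this in place a test reduces to a single check that the decoded PDU equals the expected one, and the operator itself is small enough to be unit tested on its own first.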
